What began as a personal ambition to understand GenAI end to end, not only as a user but as a quant and actuary working through every layer, has now turned into a full application with its first release.

Much like the early race to the moon, the goal was clear but ambitious:

- reliably produce technical and structured reporting

- integrate data and validation into the workflow (up and downstream)

- select the right model for the right job (respect licencing)

- reduce deployment hurdles and improve privacy options

“We choose to go to the Moon in this decade and do the other things, not because they are easy, but because they are hard; because that goal will serve to organize and measure the best of our energies and skills, because that challenge is one that we are willing to accept, one we are unwilling to postpone, and one we intend to win, and the others, too.”

— President John F. Kennedy, Rice University, September 12, 1962

And just like the engineers and astronauts who needed every minute for what mattered most, doctors should not be sweating over routine memos. Your mission is the patient, not the paperwork.

Why reliability matters

The Memo Generator app was built with one core commitment: users do not train models with their private data. Privacy and industry standards must be guaranteed by design. Anyone building their own path into AI should consider this early, so practitioners rely on safety, redundancy and transparent logic instead of learning from avoidable failures (Disclaimer: I am not indicating that Apollo’s failures were avoidable, but rather showing I learn from them).

What the app delivers today

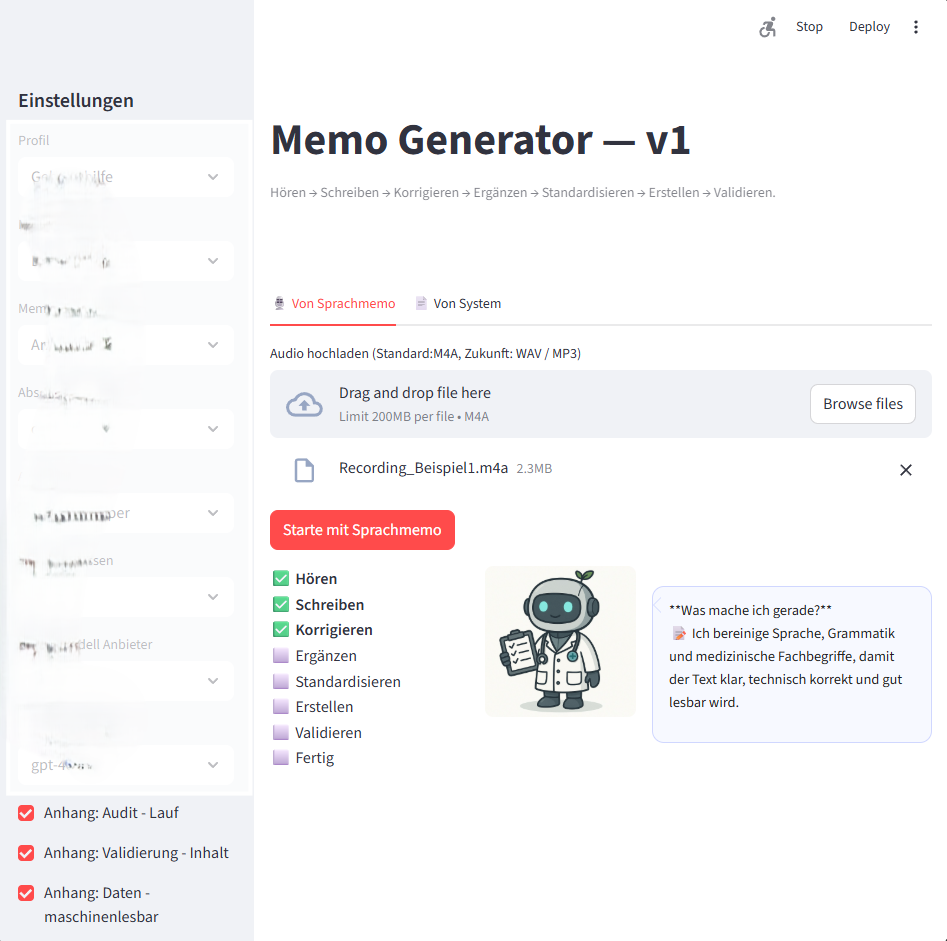

The system takes a spoken medical memo or structured data from standard APIs and turns it into a validated, structured clinical report. It combines dynamic auditing, glossary-based standardisation and multi-format output (HTML, DOCX, PDF, JSON). The interface is transparent at every step. Model choice, performance settings, languages and translations are user options. Audit logs cover operations and safety; validation reports address technical, linguistic and content-related aspects.

Here is a first glimpse of the interface (German example):

Current capabilities include

- spoken memo ingestion

- structured API inputs

- validation and auditing

- glossary logic

- multi-format output

But why stop here, when the ground for future agentic services and processes across settings, tasks and domains is prepared? This my personal mission to Mars, and I am confident I can land this until Christmas.

Next steps

The project continues with a clear roadmap.

- Provide a REST API so the full system and the atomic services integrate seamlessly into any local environment.

- Introduce self-learning capability, train it on bootstrapped reporting data, to make the application more generic, robust and adaptive.

- Generalise a domain-independent framework based on the operational foundations established here.

A Swiss approach to AI engineering

Reliability, transparency and control remain at the centre. The intention is not to build another generic service but a precise, local-first reporting engine that practitioners can trust. A rocket that takes the practitioner where they need to go, and a module that brings them back safely.

If you are interested in the lessons learned along the way, or if you are exploring structured reporting and local AI workflows in health, finance or other operational domains, feel free to reach out.

Tags: structured-reporting, swiss-engineering, local-first-ai, precision-ai